Reliable AI Agents Need Boundaries, Not More Loops

Sun Mar 08 2026

David Bleeker, Founder

Introduction / Context

The current wave of AI agent work is pushing teams past the easy demo stage. The interesting question is no longer whether a model can call a tool. It is whether the resulting system can be trusted after the third retry, the fifth external dependency failure, and the first time it touches a production system with side effects.

The shift shows up in public discussion in a predictable way. Hacker News threads keep returning to agent infrastructure, memory, and safety. Stack Overflow is full of variants of the same operational pain: iteration limits, broken tool calls, malformed outputs, and unclear failure boundaries. The common theme is that teams are not asking for a clever prompt. They are asking how to keep an agent from becoming an opaque automation hazard.

The practical shift is important. In a prototype, the agent is the feature. In production, the agent is an unreliable subsystem inside a larger application that still needs the normal engineering controls: authorization, observability, rollback, policy enforcement, and cost discipline.

The Question

How do you make AI agents reliable, secure, and auditable enough for production use?

The Answer

The short answer is that you stop treating the model as the application runtime. A production agent works best when the LLM is a bounded decision component inside a deterministic shell.

That shell should own:

- state transitions

- tool allowlists

- authorization checks

- retry policy

- cost budgets

- idempotency

- structured logging

- evaluation hooks

This matters because most agent failures are not model-intelligence failures. They are systems failures.
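The first item on the shell's list, state transitions, can be made concrete with a small run-state machine. The following is a minimal TypeScript sketch. The state names and the `transition` helper are hypothetical. The idea is that the model can only request a state change, and the shell applies it only if an explicit table allows it.

```typescript
// Hypothetical run states; the shell, not the model, owns them.
type RunState = 'planning' | 'executing' | 'awaiting_approval' | 'completed' | 'failed'

// Explicit transition table. Any move not listed here is rejected.
const allowedTransitions: Record<RunState, readonly RunState[]> = {
  planning: ['executing', 'failed'],
  executing: ['executing', 'awaiting_approval', 'completed', 'failed'],
  awaiting_approval: ['executing', 'failed'],
  completed: [],
  failed: [],
}

// Apply a requested transition (for example, one proposed by the model)
// only if the table allows it; otherwise throw and leave the run where it was.
function transition(current: RunState, requested: RunState): RunState {
  if (!allowedTransitions[current].includes(requested)) {
    throw new Error(`illegal transition: ${current} -> ${requested}`)
  }
  return requested
}
```

With this table, `transition('planning', 'executing')` returns `'executing'`, while `transition('completed', 'executing')` throws. A model that asks to keep going after completion therefore cannot reopen the run.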

What breaks in production

The first failure mode is uncontrolled recursion. The agent keeps calling tools because its stopping condition is weak, or because tool outputs are noisy and the planner keeps trying to recover.
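Here is a minimal sketch of a loop guard that enforces both a hard step cap and a limit on repeating the same tool call. `LoopGuard` and its two limits are illustrative names. Detecting identical calls by serializing the arguments with `JSON.stringify` is a simplifying assumption, because it treats the same keys in a different order as a different call.

```typescript
// Illustrative guard against runaway tool-calling loops.
class LoopGuard {
  private steps = 0
  private readonly seen = new Map<string, number>()
  private readonly maxSteps: number
  private readonly maxRepeats: number

  constructor(maxSteps: number, maxRepeats: number) {
    this.maxSteps = maxSteps
    this.maxRepeats = maxRepeats
  }

  // Call before every tool invocation; throws when the run should stop.
  check(tool: string, args: Record<string, unknown>): void {
    this.steps += 1
    if (this.steps > this.maxSteps) {
      throw new Error(`step budget of ${this.maxSteps} exhausted`)
    }
    // Simplification: identical calls are detected by serializing the arguments,
    // so key order matters.
    const key = `${tool}:${JSON.stringify(args)}`
    const count = (this.seen.get(key) ?? 0) + 1
    this.seen.set(key, count)
    if (count > this.maxRepeats) {
      throw new Error(`repeated call to ${tool} with identical arguments`)
    }
  }
}
```

The repeat check catches the "planner keeps trying to recover" case earlier than a raw step cap would, because noisy tool output tends to produce the same call again.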

The second failure mode is privilege leakage. A generic "tool calling" setup often gives the model far more reach than the user actually has. If the tool contract is not policy-aware, the model can request actions that are syntactically valid but operationally wrong.
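One way to make the tool contract policy-aware is to have each tool declare the permission it needs, then derive the model's toolset from the user's own permissions. The following is a minimal sketch. The scope strings, the `issueRefund` tool, and both helpers are hypothetical.

```typescript
// Hypothetical tool contracts: each declares the permission a caller must hold.
type ToolContract = { name: string; requiredScope: string }

const tools: ToolContract[] = [
  { name: 'readTicket', requiredScope: 'tickets:read' },
  { name: 'updateTicket', requiredScope: 'tickets:write' },
  { name: 'issueRefund', requiredScope: 'billing:refund' },
]

// Offer the model only the tools the *user* may use, so the model's reach
// never exceeds the reach of the person it is acting for.
function toolsForUser(userScopes: ReadonlySet<string>): string[] {
  return tools.filter((t) => userScopes.has(t.requiredScope)).map((t) => t.name)
}

// Re-check at call time too: the model might name a tool it was never offered.
function assertToolAllowed(tool: string, userScopes: ReadonlySet<string>): void {
  const contract = tools.find((t) => t.name === tool)
  if (!contract || !userScopes.has(contract.requiredScope)) {
    throw new Error(`tool ${tool} is not permitted for this user`)
  }
}
```

The call-time check matters because filtering the offered tool list alone does not stop a model from requesting something else.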

The third failure mode is missing auditability. When a user asks why the system updated this ticket, emailed this customer, and then opened a Jira issue, teams often discover that the answer is buried across prompt text, model outputs, and downstream API logs with no shared correlation ID.
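A minimal sketch of the fix is to mint one correlation ID per run and stamp it on every event, including the headers sent to downstream APIs. `AuditEvent`, `createRunLogger`, and the `x-correlation-id` header name are assumptions. The in-memory array stands in for a real log store. `randomUUID` comes from Node's built-in `crypto` module.

```typescript
import { randomUUID } from 'node:crypto'

// Illustrative audit record: every event carries the run's correlation ID.
type AuditEvent = {
  correlationId: string
  at: string // ISO timestamp
  kind: 'prompt' | 'model_output' | 'tool_call' | 'tool_result'
  payload: unknown
}

// Mint one correlation ID per run and stamp it on every event.
// The in-memory array stands in for a real log store.
function createRunLogger() {
  const correlationId = randomUUID()
  const events: AuditEvent[] = []
  return {
    correlationId,
    events,
    record(kind: AuditEvent['kind'], payload: unknown): void {
      events.push({ correlationId, at: new Date().toISOString(), kind, payload })
    },
    // Headers for downstream API calls, so their logs can be joined to this run.
    // The header name is an assumption; use whatever your services already propagate.
    outboundHeaders(): Record<string, string> {
      return { 'x-correlation-id': correlationId }
    },
  }
}
```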

The fourth failure mode is evaluation drift. A workflow that looked good in a notebook collapses when the task mix changes or upstream APIs get slower. Agent quality is not a single accuracy number. It is a distribution of behaviors under latency, ambiguity, partial failure, and adversarial inputs.
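Treating quality as a distribution means reporting results per slice instead of as one average. The following is a minimal sketch that computes pass rate and 95th-percentile latency per task category from replayed runs. The `RunRecord` fields are hypothetical, and the percentile uses the nearest-rank method.

```typescript
// Illustrative record of one replayed agent run.
type RunRecord = { category: string; passed: boolean; latencyMs: number }

type SliceReport = { runs: number; passRate: number; p95LatencyMs: number }

// Nearest-rank percentile on a non-empty array.
function percentile(values: number[], p: number): number {
  const sorted = [...values].sort((a, b) => a - b)
  const rank = Math.ceil((p / 100) * sorted.length)
  return sorted[Math.max(0, rank - 1)]
}

// Group runs by task category so a regression in one slice is not hidden
// by a healthy overall average.
function reportBySlice(runs: RunRecord[]): Map<string, SliceReport> {
  const groups = new Map<string, RunRecord[]>()
  for (const run of runs) {
    const group = groups.get(run.category) ?? []
    group.push(run)
    groups.set(run.category, group)
  }
  const report = new Map<string, SliceReport>()
  for (const [category, group] of groups) {
    report.set(category, {
      runs: group.length,
      passRate: group.filter((r) => r.passed).length / group.length,
      p95LatencyMs: percentile(group.map((r) => r.latencyMs), 95),
    })
  }
  return report
}
```

Running the same report before and after a change in task mix or upstream latency makes drift visible per category rather than as a small dip in a global number.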

What works better

The strongest production pattern is to split the agent into explicit stages:

- classify the task

- decide whether the task is agentic at all

- generate a bounded plan

- execute tools through a controlled runtime

- require confirmation for high-impact side effects

- record every step for replay and review
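The first two stages are routing decisions that can stay deterministic even when the classifier is a model. The following is a minimal sketch, assuming a hypothetical closed set of task classes. The step limits and approval flags in the mapping are illustrative values.

```typescript
// Hypothetical task classes produced by the classification stage.
type TaskClass = 'faq_lookup' | 'draft_reply' | 'refund_request' | 'unknown'

// Routing outcome: most tasks should never reach open-ended agent execution.
type Route =
  | { kind: 'workflow'; workflow: string } // fixed, deterministic code path
  | { kind: 'agent'; maxSteps: number; requiresApproval: boolean } // bounded agent run
  | { kind: 'reject'; reason: string }

// Deterministic routing after classification. The classifier may be a model,
// but anything outside the known classes falls through to reject.
function route(taskClass: TaskClass): Route {
  switch (taskClass) {
    case 'faq_lookup':
      return { kind: 'workflow', workflow: 'searchDocs' }
    case 'draft_reply':
      return { kind: 'agent', maxSteps: 5, requiresApproval: false }
    case 'refund_request':
      return { kind: 'agent', maxSteps: 3, requiresApproval: true }
    default:
      return { kind: 'reject', reason: 'unclassified task' }
  }
}
```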

Staging the work this way sounds less magical than "let the agent handle it," but that is exactly why it works. Most business tasks do not need open-ended autonomy. They need constrained orchestration with localized model reasoning.

Tradeoffs and implementation risks

The main tradeoff is reduced flexibility. A bounded agent will occasionally refuse tasks a more permissive agent might solve. That is usually a good trade. False negatives (refusing a task that would have been safe) cost some convenience. False positives (allowing an action that should have been blocked) create security incidents, broken records, and impossible debugging sessions.

Another risk is overfitting your controls to the happy path. Teams often cap loop counts and call it done, but loop count alone does not protect against bad decisions. You also need semantic controls: tool scopes, argument validation, replay protection, and action approval gates.
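The following is a minimal sketch of two semantic controls: per-tool argument validation and replay protection keyed on an idempotency key. The validation rules, the `admitCall` name, and the in-memory `seenKeys` set are illustrative. A production store for used keys would need to be durable and shared across workers.

```typescript
type Args = Record<string, unknown>

// Per-tool argument validators: return an error message, or null if valid.
// These rules are hypothetical examples, not a complete schema.
const validators: Record<string, (args: Args) => string | null> = {
  updateTicket: (args) => {
    if (typeof args.ticketId !== 'string') return 'ticketId must be a string'
    if (!['low', 'normal', 'high'].includes(String(args.priority))) {
      return 'priority must be low, normal, or high'
    }
    return null
  },
}

// Replay protection: a call carrying an already-used key is refused.
// In-memory for illustration only.
const seenKeys = new Set<string>()

function admitCall(tool: string, args: Args, idempotencyKey: string): void {
  const validate = validators[tool]
  if (!validate) throw new Error(`no argument contract for ${tool}`)
  const problem = validate(args)
  if (problem) throw new Error(`${tool}: ${problem}`)
  if (seenKeys.has(idempotencyKey)) throw new Error(`replayed call ${idempotencyKey}`)
  seenKeys.add(idempotencyKey)
}
```

Neither check depends on how many steps have run, which is the point: a single well-formed but wrong call is caught even inside the loop cap.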

The broader engineering lesson is that agent reliability is closer to workflow reliability than to chatbot quality. Think in terms of SLOs, failure domains, and operational budgets, not just prompt quality.
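Operational budgets only help if the runtime enforces them before each step. The following is a minimal sketch of a per-run budget covering cost and wall-clock time. The `RunBudget` class and its limits are hypothetical. The clock is injectable so the logic can be tested without waiting.

```typescript
// Illustrative per-run budget covering cost and wall-clock time.
class RunBudget {
  private spentUsd = 0
  private readonly startedAt: number
  private readonly maxUsd: number
  private readonly maxMs: number
  private readonly now: () => number

  constructor(maxUsd: number, maxMs: number, now: () => number = Date.now) {
    this.maxUsd = maxUsd
    this.maxMs = maxMs
    this.now = now
    this.startedAt = now()
  }

  // Record the cost of a model or tool call that already happened.
  charge(usd: number): void {
    this.spentUsd += usd
  }

  // Call before starting any new step; throws once either budget is exhausted.
  assertWithinBudget(): void {
    if (this.spentUsd >= this.maxUsd) {
      throw new Error(`cost budget exhausted: $${this.spentUsd.toFixed(2)} of $${this.maxUsd}`)
    }
    if (this.now() - this.startedAt >= this.maxMs) {
      throw new Error(`time budget of ${this.maxMs} ms exhausted`)
    }
  }
}
```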

Architecture / Implementation Guidance

The concrete recommendation is to build an agent runtime with a typed execution graph and a policy layer that sits outside the model.

A practical shape looks like this:

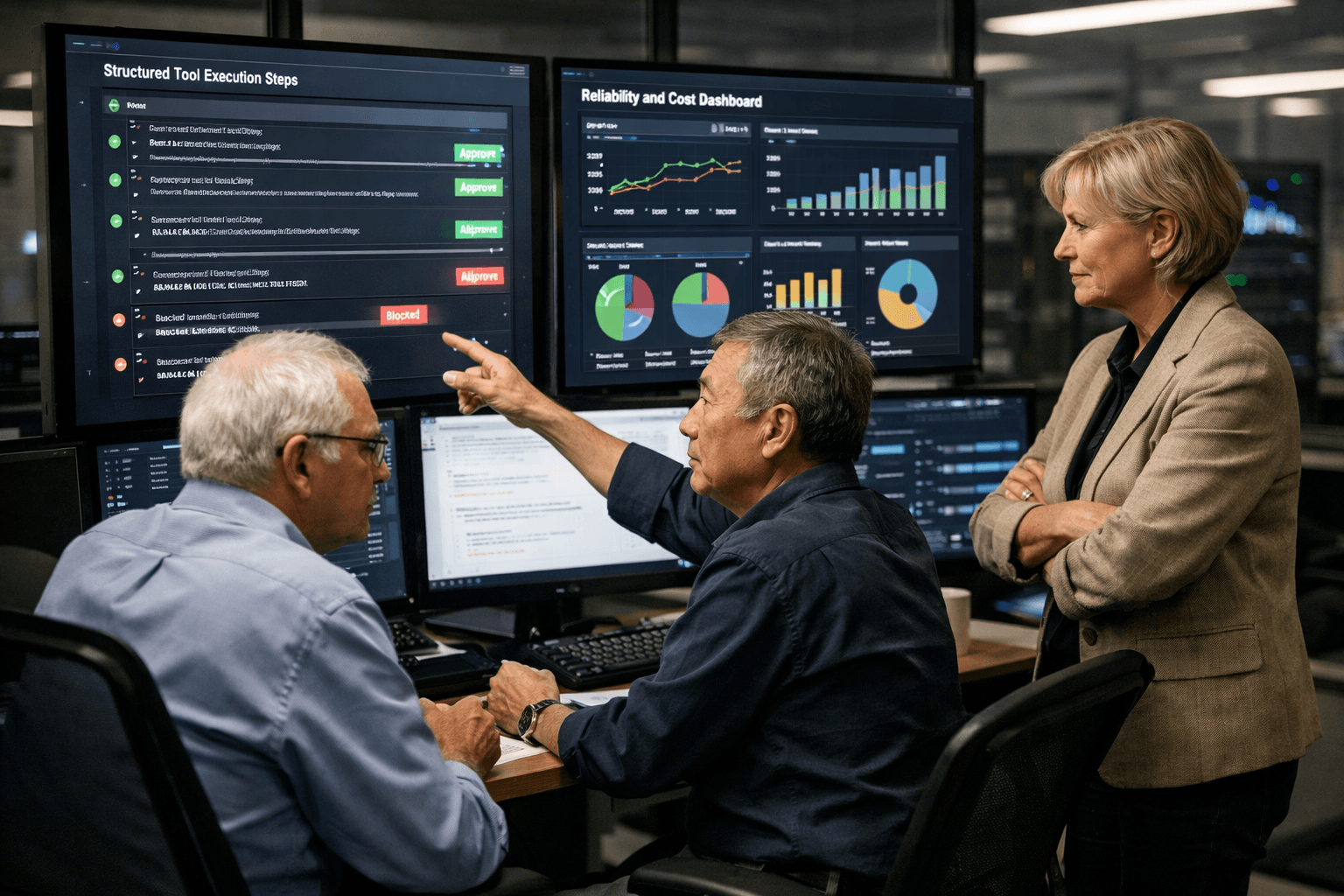

- planner: model creates a plan in structured JSON

- policy engine: validates requested tool use against user identity, environment, and task class

- executor: runs the tool with timeouts, retries, and idempotency keys

- review gate: blocks destructive actions until explicit approval

- event log: stores prompts, decisions, tool arguments, outputs, costs, and correlation IDs

- evaluator: scores runs offline using replay data
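The planner's JSON is untrusted input until the runtime has checked it. The following is a minimal sketch of that check. The field names follow the `AgentPlan` type in the code section of this article. `parsePlan`, the hard cap of 10 steps, and the error messages are assumptions.

```typescript
// Closed set of tools the planner may name (mirrors ToolRequest in the code section).
const KNOWN_TOOLS = new Set(['searchDocs', 'readTicket', 'draftReply', 'updateTicket'])
const HARD_STEP_CAP = 10 // runtime ceiling, regardless of what the plan requests

type ValidatedStep = {
  reason: string
  tool: { name: string; args: Record<string, unknown> }
  requiresApproval: boolean
}
type ValidatedPlan = { goal: string; stopCondition: string; maxSteps: number; steps: ValidatedStep[] }

function isRecord(value: unknown): value is Record<string, unknown> {
  return typeof value === 'object' && value !== null && !Array.isArray(value)
}

// Parse model output and reject anything that does not match the plan contract.
function parsePlan(raw: string): ValidatedPlan {
  const data: unknown = JSON.parse(raw) // throws on malformed JSON
  if (!isRecord(data) || typeof data.goal !== 'string' || typeof data.stopCondition !== 'string') {
    throw new Error('plan must have string goal and stopCondition')
  }
  if (!Array.isArray(data.steps)) throw new Error('plan.steps must be an array')
  // The model's own maxSteps is honoured only up to the runtime's hard cap.
  const maxSteps = Math.min(Number(data.maxSteps) || 0, HARD_STEP_CAP)
  if (data.steps.length === 0 || data.steps.length > maxSteps) {
    throw new Error(`plan must have between 1 and ${maxSteps} steps`)
  }
  const steps = data.steps.map((step: unknown, i: number): ValidatedStep => {
    if (!isRecord(step) || !isRecord(step.tool) || typeof step.tool.name !== 'string') {
      throw new Error(`step ${i} is malformed`)
    }
    if (!KNOWN_TOOLS.has(step.tool.name)) throw new Error(`step ${i} uses unknown tool ${step.tool.name}`)
    return {
      reason: String(step.reason ?? ''),
      tool: { name: step.tool.name, args: isRecord(step.tool.args) ? step.tool.args : {} },
      // Only an explicit true counts; anything else means no approval flag.
      requiresApproval: step.requiresApproval === true,
    }
  })
  return { goal: data.goal, stopCondition: data.stopCondition, maxSteps, steps }
}
```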

Splitting the runtime this way keeps the model strong where it has leverage and weak where determinism matters.
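Determinism matters most in the executor. The following is a minimal sketch of a timeout-and-retry wrapper with exponential backoff. `withTimeout`, `executeWithRetry`, and the default attempt and delay values are illustrative. The sketch assumes the tool honours an idempotency key, which is why the same key is passed on every attempt.

```typescript
// Reject if the promise does not settle within timeoutMs.
// This stops waiting; it does not cancel the underlying work.
function withTimeout<T>(promise: Promise<T>, timeoutMs: number): Promise<T> {
  let timer: ReturnType<typeof setTimeout> | undefined
  const timeout = new Promise<never>((_, reject) => {
    timer = setTimeout(() => reject(new Error(`timed out after ${timeoutMs} ms`)), timeoutMs)
  })
  return Promise.race([promise, timeout]).finally(() => clearTimeout(timer))
}

const sleep = (ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms))

// Retry a tool call with exponential backoff. The same idempotency key is sent
// on every attempt so the tool can deduplicate a call that succeeded but timed out.
async function executeWithRetry<T>(
  call: (idempotencyKey: string) => Promise<T>,
  idempotencyKey: string,
  opts = { attempts: 3, timeoutMs: 10_000, baseDelayMs: 200 },
): Promise<T> {
  let lastError: unknown
  for (let attempt = 0; attempt < opts.attempts; attempt++) {
    try {
      return await withTimeout(call(idempotencyKey), opts.timeoutMs)
    } catch (error) {
      lastError = error
      // Back off 200 ms, 400 ms, ... between attempts (with the default options).
      if (attempt < opts.attempts - 1) await sleep(opts.baseDelayMs * 2 ** attempt)
    }
  }
  throw lastError
}
```

Because a timed-out call may still complete on the tool's side, retries are only safe when the key lets the tool recognise the repeat. Without that, the wrapper could turn one refund into two.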

A good rule is to reserve unconstrained tool use for internal low-risk tasks and require narrower contracts for anything customer-visible or state-changing. For example, "draft a support response" can be agentic. "Issue a refund" should probably require a policy check and a human or deterministic approval layer.
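That rule is small enough to write down as reviewable code instead of leaving it to the model. The following is a minimal sketch. The `ToolProfile` fields and the three control levels are hypothetical. Sending every state-changing tool to approval is a stricter assumption than the refund example strictly requires.

```typescript
// Hypothetical risk profile assigned to each tool by engineers, not by the model.
type ToolProfile = { customerVisible: boolean; changesState: boolean }

type Control = 'autonomous' | 'policy_check' | 'policy_check_and_approval'

// Unconstrained use only for internal, read-only work. Customer-visible output
// gets a policy check; anything that changes state also needs approval.
function requiredControl(profile: ToolProfile): Control {
  if (profile.changesState) return 'policy_check_and_approval'
  if (profile.customerVisible) return 'policy_check'
  return 'autonomous'
}

// Illustrative profiles for the two examples in the text.
const draftSupportReply: ToolProfile = { customerVisible: false, changesState: false } // a draft is not sent yet
const issueRefund: ToolProfile = { customerVisible: true, changesState: true }
```

Under these profiles, drafting a reply maps to `'autonomous'` and issuing a refund maps to `'policy_check_and_approval'`.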

You also want two levels of memory:

- execution memory for the current run

- curated long-term memory that is written through explicit rules

The mistake is letting every observation become memory. That creates contamination and hidden feedback loops. Long-term memory should be closer to a reviewed knowledge store than an append-only transcript.
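The following is a minimal sketch of the two levels. Execution memory is a per-run scratchpad that is discarded. The curated store only accepts entries that pass explicit write rules. The rules used here (a named source, a reviewer, no duplicates) and all type and class names are assumptions for illustration.

```typescript
// Execution memory: scoped to one run and thrown away afterwards.
type ExecutionMemory = { runId: string; notes: string[] }

// Candidate for long-term memory; must carry provenance to be considered.
type MemoryCandidate = { fact: string; sourceRunId: string; source?: string; reviewedBy?: string }

// Curated long-term store: writes go through explicit rules, not automatic append.
class CuratedMemory {
  private readonly entries = new Map<string, MemoryCandidate>()

  // Returns a rejection reason, or null if the entry was stored.
  propose(candidate: MemoryCandidate): string | null {
    if (!candidate.source) return 'rejected: no source for this fact'
    if (!candidate.reviewedBy) return 'rejected: not reviewed'
    const key = candidate.fact.trim().toLowerCase()
    if (this.entries.has(key)) return 'rejected: duplicate'
    this.entries.set(key, candidate)
    return null
  }

  get size(): number {
    return this.entries.size
  }
}
```

A run's scratch notes only reach the curated store if something explicitly proposes them and they pass the rules. This is what keeps one noisy run from feeding every later run.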

Code Snippets

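```typescript
type ToolRequest = {
  name: 'searchDocs' | 'readTicket' | 'draftReply' | 'updateTicket'
  args: Record<string, unknown>
}

type PlannedStep = {
  reason: string
  tool: ToolRequest
  requiresApproval: boolean
}

type AgentPlan = {
  goal: string
  stopCondition: string
  maxSteps: number
  steps: PlannedStep[]
}

// Contracts the runtime depends on, inferred from how they are used below.
export interface ToolRegistry {
  execute(
    name: ToolRequest['name'],
    args: Record<string, unknown>,
    options: { timeoutMs: number; idempotencyKey: string },
  ): Promise<unknown>
}

export interface EventLog {
  record(event: string, payload: unknown): Promise<void>
}

export async function runAgentPlan(input: {
  runId: string // stable ID for this run; reuse it if the whole run is retried
  userId: string
  plan: AgentPlan
  policy: PolicyEngine
  tools: ToolRegistry
  log: EventLog
}) {
  // Reject an over-long plan before any tool runs, instead of failing
  // halfway through after some side effects have already happened.
  if (input.plan.steps.length > input.plan.maxSteps) {
    throw new Error('plan exceeded maxSteps')
  }

  const executed: Array<{ step: PlannedStep; result: unknown }> = []

  for (const [index, step] of input.plan.steps.entries()) {
    // The runtime, not the model, decides: this throws if the step is not allowed.
    input.policy.assertAllowed({
      userId: input.userId,
      tool: step.tool.name,
      args: step.tool.args,
    })

    // Pause before a high-impact step and tell the caller why the run stopped.
    if (step.requiresApproval) {
      await input.log.record('approval_required', { runId: input.runId, index, step })
      return { status: 'awaiting_approval' as const, executed, pendingStep: step }
    }

    const result = await input.tools.execute(step.tool.name, step.tool.args, {
      timeoutMs: 10_000,
      // Derived from the run and step index, so a retried run sends the same
      // key and the tool can deduplicate the side effect.
      idempotencyKey: `${input.runId}:${index}`,
    })

    executed.push({ step, result })
    await input.log.record('step_executed', { runId: input.runId, index, step, result })
  }

  return { status: 'completed' as const, executed }
}

export class PolicyEngine {
  // Example rules; userId is available here for permission checks.
  assertAllowed(input: { userId: string; tool: string; args: Record<string, unknown> }) {
    if (input.tool === 'updateTicket' && input.args.priority === 'critical') {
      throw new Error('critical ticket updates require human approval')
    }

    if (input.tool === 'draftReply' && typeof input.args.channel !== 'string') {
      throw new Error('draftReply requires a channel')
    }
  }
}
```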

The important detail is not the class names. It is the control boundary. The model proposes; the runtime decides.

Key Takeaways

- Production agents should be treated as bounded workflow components, not autonomous runtimes.

- The most important controls are policy enforcement, approval gates, idempotency, and observability.

- Reliability improves when you split planning from execution and log both.

- Memory should be curated, not automatic.

- If a task can cause side effects, the runtime must own the final decision.